Dataset for Gait Mode Recognition on Activities of Daily Living

The engineering application concerns the challenging task of recognizing the wearer's locomotion activities in real time, which is needed to ensure appropriate control of the robot during daily living activities. This dataset was collected to predict future sequences of activities from a given sequence of daily living activities of a subject wearing a lower-limb exoskeleton, using a deep-learning-based algorithm. Hence, the dataset contains not only the kinematic features used by the deep-learning-based algorithm to obtain a high-accuracy classifier but also raw data such as the acceleration, angular rate, and quaternions of both feet.

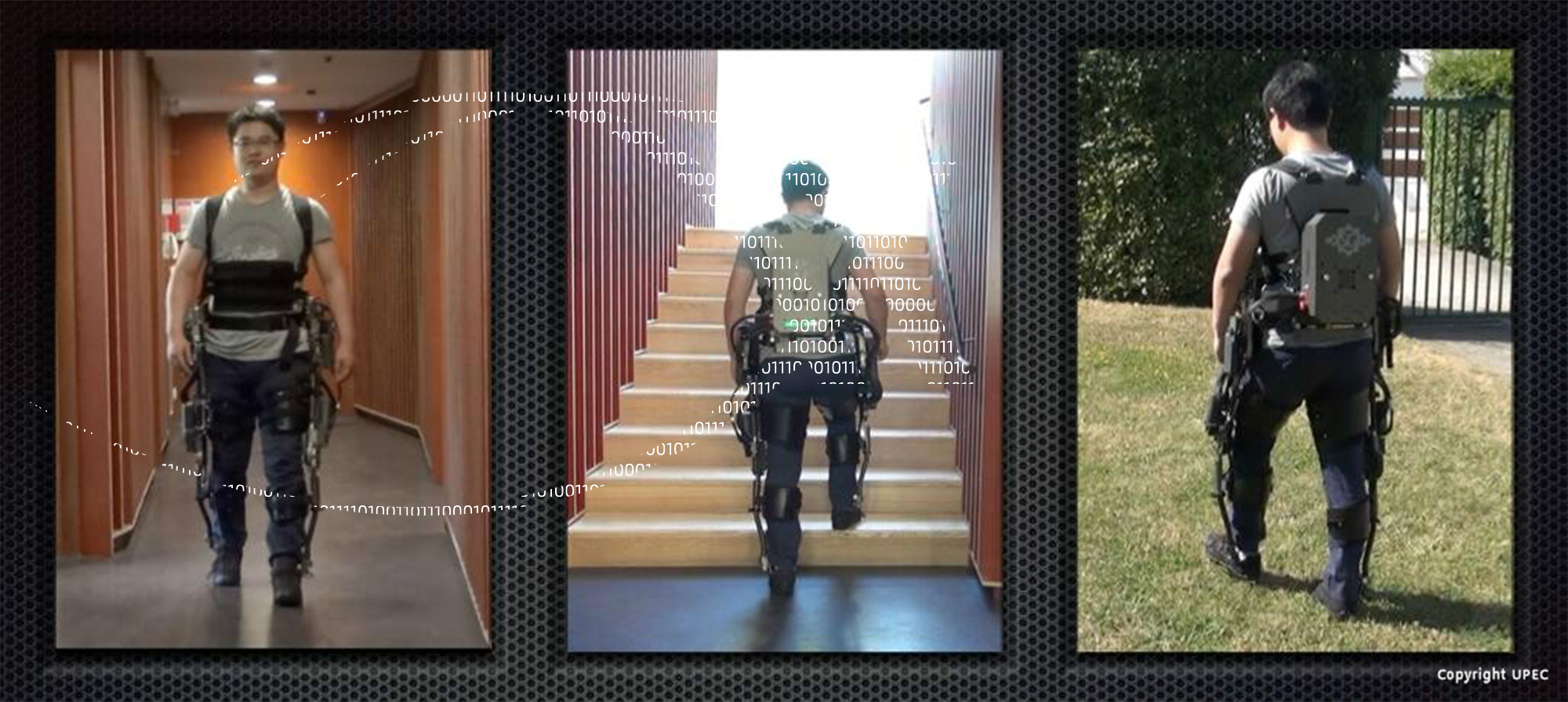

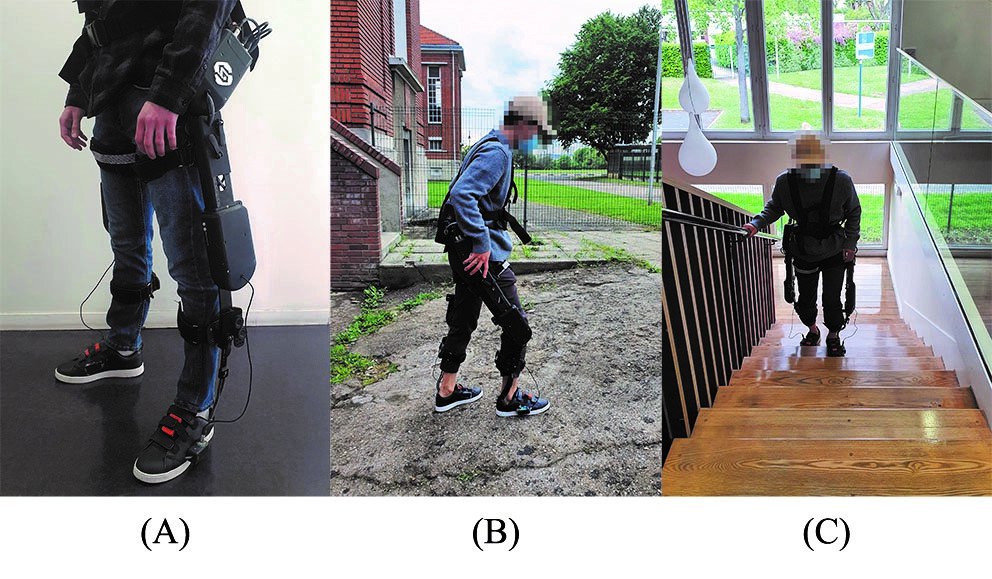

Experiment protocol: Ten healthy subjects (gender: 9 males, 1 female; age: 28±4 years; height: 1.74±0.05 m; weight: 66.8±10 kg) participated in this study. Data from nine subjects are used for the learning process, and data from the tenth subject are used for the evaluation process. Each subject wore the lower-limb exoskeleton and performed five different gait modes: level walking (LW), ramp up (RU), ramp down (RD), stair up (SU), and stair down (SD), as shown in Fig. 1. During data acquisition, the exoskeleton was in passive mode, and the segment lengths of the robot (shank and thigh) were adjusted to fit the subject’s height and to avoid misalignment between the human and robot joints.
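The subject-wise split described above (nine subjects for learning, one held out for evaluation) can be sketched as follows. This is a minimal illustration only: the subject identifiers and the `split_by_subject` helper are hypothetical, and the actual file names in the archive may differ.

```python
from typing import List, Sequence, Tuple


def split_by_subject(
    subject_ids: Sequence[str], test_subject: str
) -> Tuple[List[str], List[str]]:
    """Hold out one subject for evaluation and keep the others for learning."""
    if test_subject not in subject_ids:
        raise ValueError(f"unknown subject: {test_subject}")
    train = [s for s in subject_ids if s != test_subject]
    return train, [test_subject]


# Hypothetical identifiers; the real names in the dataset may differ.
subjects = [f"subject_{i:02d}" for i in range(1, 11)]
train_subjects, test_subjects = split_by_subject(subjects, "subject_10")
```

Splitting by subject rather than by sample keeps all data from the evaluation subject out of the learning set, so the evaluation reflects performance on an unseen wearer.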

Experimental Device: To record the accelerations, angular velocities, and orientations of the subject’s feet while wearing the exoskeleton, two IMUs (MTw Awinda, Xsens, Netherlands) are used, one on each shoe (see Fig. 1 (A)). The data are acquired from the two IMUs at a 100 Hz sampling frequency using the development software (LabVIEW 2019, National Instruments, US).
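Because the recordings are sampled at 100 Hz, a duration in seconds maps directly to a number of samples. The sketch below shows one way to cut a recording into fixed-length windows for sequence models. The window and step durations are illustrative assumptions, not values taken from the dataset, and `sliding_windows` is a hypothetical helper.

```python
from typing import List, Tuple

SAMPLING_HZ = 100  # sampling frequency stated for the two IMUs


def sliding_windows(
    n_samples: int, window_s: float, step_s: float
) -> List[Tuple[int, int]]:
    """Return (start, stop) sample indices of windows that fit in the recording."""
    size = round(window_s * SAMPLING_HZ)
    step = round(step_s * SAMPLING_HZ)
    if size <= 0 or step <= 0:
        raise ValueError("window and step must be positive")
    return [(start, start + size) for start in range(0, n_samples - size + 1, step)]


# Assumed example: a 5 s recording, 1 s windows, 0.5 s step.
windows = sliding_windows(n_samples=500, window_s=1.0, step_s=0.5)
```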

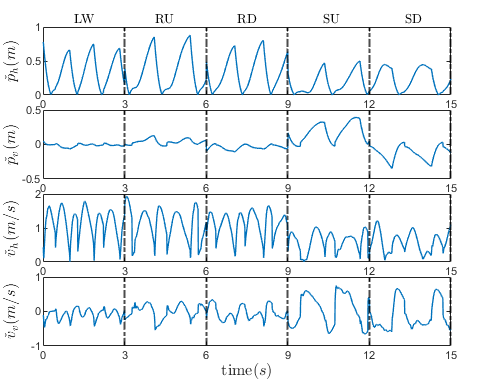

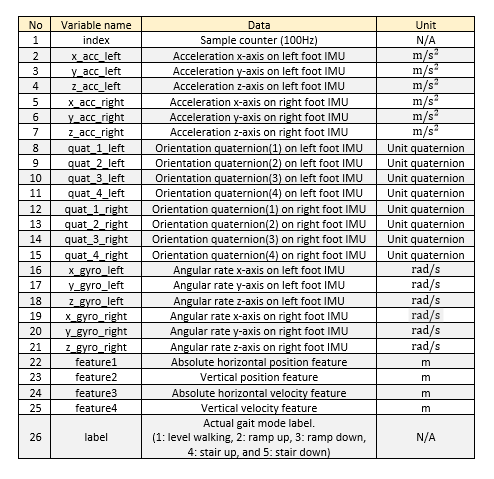

Feature extraction: The feature extraction process calculates representative kinematic features from raw data such as acceleration, quaternions, and gyroscope data. To process the features, the stance foot is defined as the reference foot and the swing foot as the non-reference foot. During the double stance phase, the prior stance foot is designated as the reference foot. The processed features consist of the absolute horizontal position ($\check{p}_h$), vertical position ($\check{p}_v$), absolute horizontal velocity ($\check{v}_h$), and vertical velocity ($\check{v}_v$) of the non-reference foot.
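The reference-foot rule can be illustrated with a short sketch. It assumes that per-sample stance flags for each foot are already available from an upstream gait-phase detector, which is not described here; the `assign_reference_foot` helper and the sample flags are hypothetical and are not the authors' implementation.

```python
from typing import List, Optional, Sequence


def assign_reference_foot(
    left_stance: Sequence[bool], right_stance: Sequence[bool]
) -> List[Optional[str]]:
    """Label the reference foot per sample from stance flags of both feet."""
    reference: Optional[str] = None  # undefined until a single stance is seen
    labels: List[Optional[str]] = []
    for left, right in zip(left_stance, right_stance):
        if left and not right:
            reference = "left"   # single stance on the left foot
        elif right and not left:
            reference = "right"  # single stance on the right foot
        # Double stance: keep the prior stance foot as the reference.
        labels.append(reference)
    return labels


# Assumed stance flags for six consecutive samples.
left = [True, True, True, False, False, True]
right = [False, False, True, True, True, True]
labels = assign_reference_foot(left, right)
```

In this example the third sample is a double stance, so the left foot (the prior stance foot) stays the reference until the right foot enters single stance.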

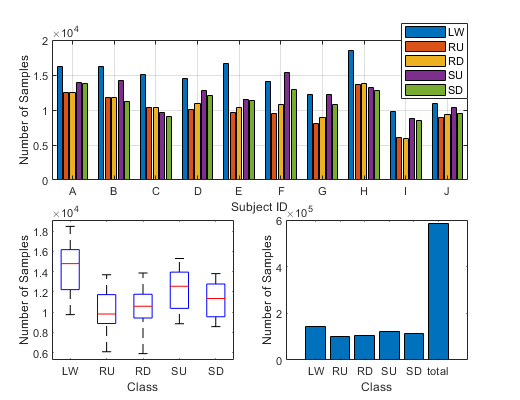

Figure 3 shows the statistical analysis of the dataset per subject and per gait mode class. The dataset folder stores each subject's data as “.csv” files, and each file is organized into the 26 variables listed in Table 1.
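A subject file can be read and checked against the stated 26 variables with the Python standard library. The sketch below assumes that each file starts with a header row, which is not confirmed here; the column names in the synthetic example are placeholders, not the names from Table 1.

```python
import csv
import io
from typing import List, TextIO, Tuple

EXPECTED_COLUMNS = 26  # number of variables stated for each file


def read_subject_csv(handle: TextIO) -> Tuple[List[str], List[List[str]]]:
    """Read a header row and data rows, checking the column count."""
    reader = csv.reader(handle)
    header = next(reader)  # assumption: the first row holds column names
    if len(header) != EXPECTED_COLUMNS:
        raise ValueError(f"expected {EXPECTED_COLUMNS} columns, got {len(header)}")
    rows = [row for row in reader if row]  # skip blank lines, keep raw strings
    return header, rows


# Synthetic stand-in for a real subject file, with placeholder column names.
fake_file = io.StringIO(
    ",".join(f"var_{i}" for i in range(EXPECTED_COLUMNS))
    + "\n"
    + ",".join(["0.0"] * EXPECTED_COLUMNS)
    + "\n"
)
header, rows = read_subject_csv(fake_file)
```

For a real file, the same function can be called on a handle opened with `open(path, newline="")`. Values are kept as strings so that no assumption is made about which columns are numeric.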

You can download the dataset using the following link: subject_dataset.zip