Dataset for Recognition and Reasoning on Activities of Daily Living

This dataset was collected as part of the SIRIUS team's research on human activity recognition and behaviour analysis, with the aim of implementing context-aware ambient assisted living robotic systems. It consists of re-enactments of the most common activities of daily living that can occur on a typical day in the main rooms of an apartment. The dataset is used to develop new machine learning and reasoning methods that enable an agent to automatically recognize activities occurring in normal contexts as well as activities occurring in abnormal contexts, which may be merely exceptional, with no criticality, or dangerous to human safety.

Activities in abnormal contexts reflect situations that most elderly persons living independently may face. These situations are considered anomalies in the context of an activity, and they can happen from time to time in the daily life of an elderly person. Detecting an abnormal context enables an assistive agent to prevent it from occurring, or to react in order to protect the elderly inhabitant. Two kinds of abnormal contexts are considered in this dataset: (i) memory lapses, which correspond to forgetting a mandatory action, such as forgetting to remove the pan from the hotplate after eating or forgetting to take the drugs; (ii) executing activities in an unsuitable temporal or spatial context, such as preparing breakfast instead of dinner (after a nap and after a snack), sleeping just after waking up, or not taking the necessary time to eat a meal.
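The first kind of abnormal context, a forgotten mandatory action, can be illustrated with a simple rule check. The following is a minimal sketch and not the method used with this dataset: the action labels, the `RULES` mapping and the function name are hypothetical, and it assumes each session is available as an ordered list of action labels.

```python
def find_forgotten_actions(actions, mandatory_pairs):
    """Return the trigger actions whose mandatory follow-up never occurs later.

    actions: ordered list of action labels observed during one session.
    mandatory_pairs: dict mapping a trigger label to the label that must follow it.
    """
    forgotten = []
    for index, label in enumerate(actions):
        follow_up = mandatory_pairs.get(label)
        # The follow-up must appear somewhere after the trigger action.
        if follow_up is not None and follow_up not in actions[index + 1:]:
            forgotten.append(label)
    return forgotten


# Hypothetical labels, chosen only for illustration.
RULES = {"put_pan_on_hotplate": "remove_pan_from_hotplate"}
session = ["put_pan_on_hotplate", "eat_meal", "leave_kitchen"]
print(find_forgotten_actions(session, RULES))  # ['put_pan_on_hotplate']
```

A rule table of this kind only covers omissions; activities carried out at an unsuitable time or place would need additional temporal and spatial information.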

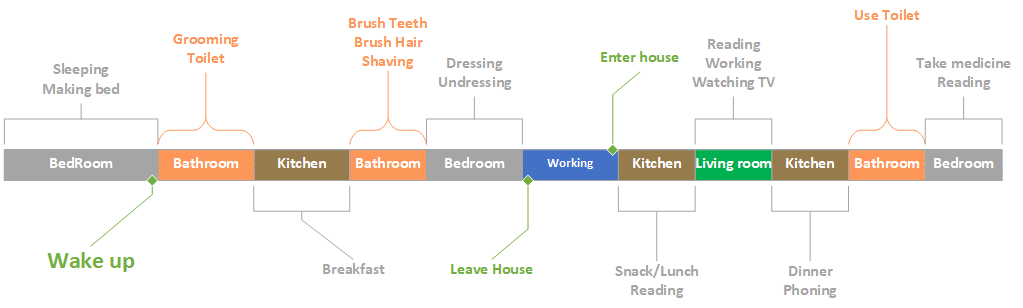

To make the daily activities as realistic as possible, the experimental protocol takes the following scenarios into account:

• Interleaved activities (C): an activity B begins before the end of the previous activity A without stopping or interrupting it, the latter ending before the end of activity B.

• Interrupted activities (I): an activity B begins in the middle of another activity A; when activity B is over, the subject resumes the interrupted activity A.

• Sequenced activities (S): sequential activities, one after the other, without one of them interrupting the other.

• Concurrent activities (P): two activities, A and B, start at the same time and are performed independently of each other.
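The four scenarios above can be told apart from the start and end times of two activities. The sketch below is only an illustration under stated assumptions: the `Interval` type and the function name are hypothetical, each activity is assumed to be annotated as a single span (an interrupted activity A runs from its first start to its final end), and ties on the end time are arbitrarily treated as interleaved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interval:
    """Start and end time of one annotated activity (any consistent unit)."""
    start: float
    end: float


def classify_scenario(a: Interval, b: Interval) -> str:
    """Return the scenario code (S, C, I or P) for activities A and B.

    Assumes a.start <= b.start, i.e. A is the activity that begins first.
    """
    if a.start > b.start:
        raise ValueError("expected activity A to start no later than B")
    if a.start == b.start:
        return "P"  # concurrent: both activities start at the same time
    if b.start >= a.end:
        return "S"  # sequenced: B starts once A is finished
    if b.end < a.end:
        return "I"  # interrupted: B happens inside A, then A resumes
    return "C"      # interleaved: B starts during A and A ends first


print(classify_scenario(Interval(0, 10), Interval(5, 15)))  # C
```

Working on whole spans keeps the check to a few comparisons; if an interrupted activity were annotated as two separate segments, the segments would have to be merged first.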

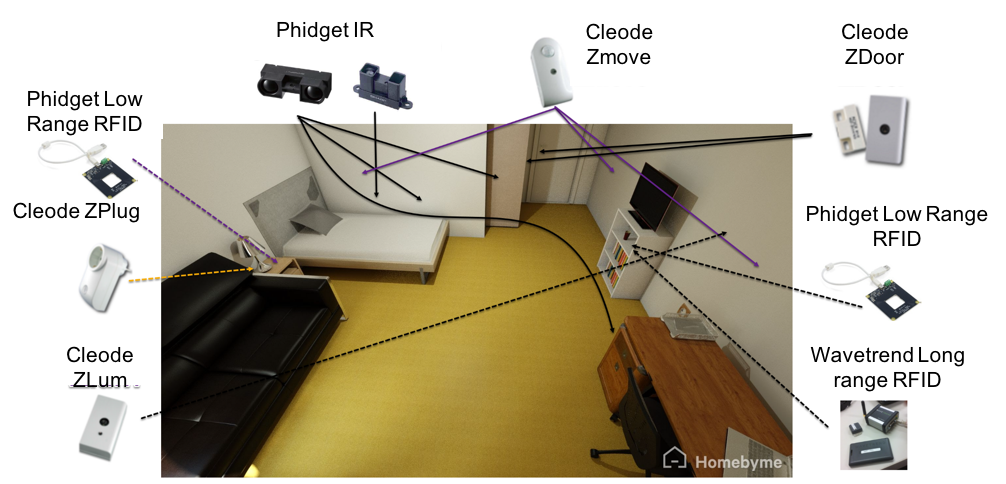

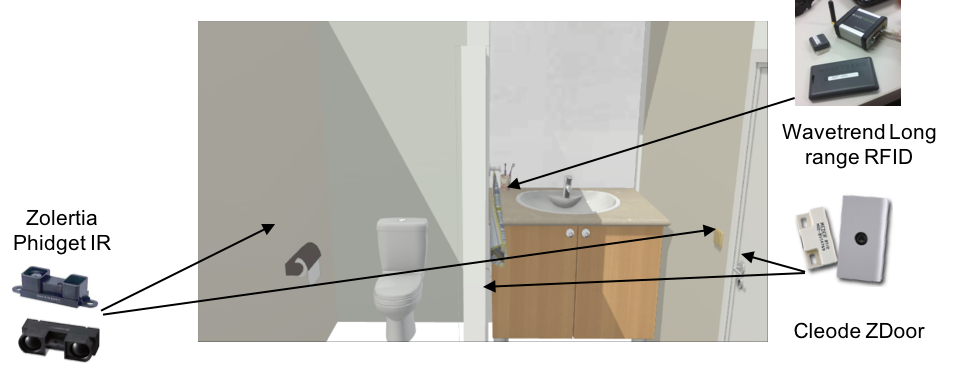

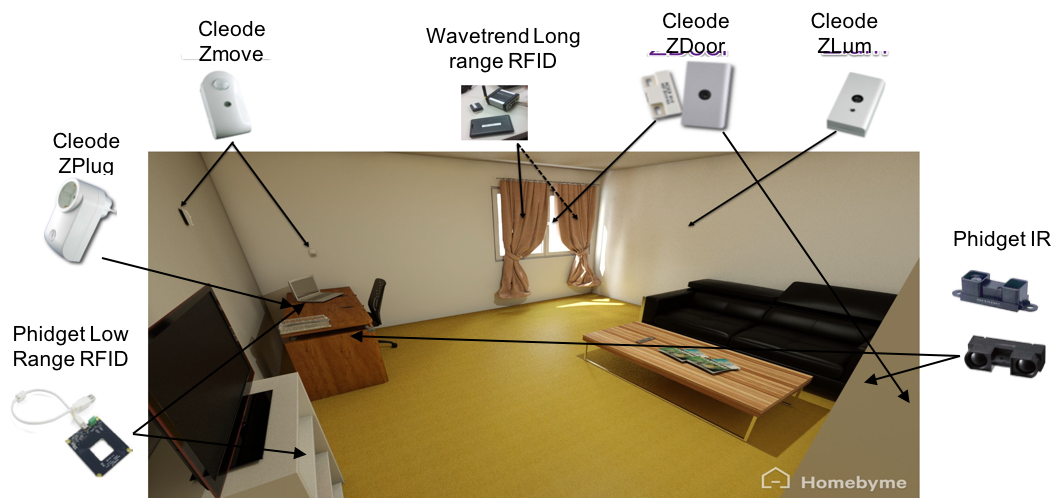

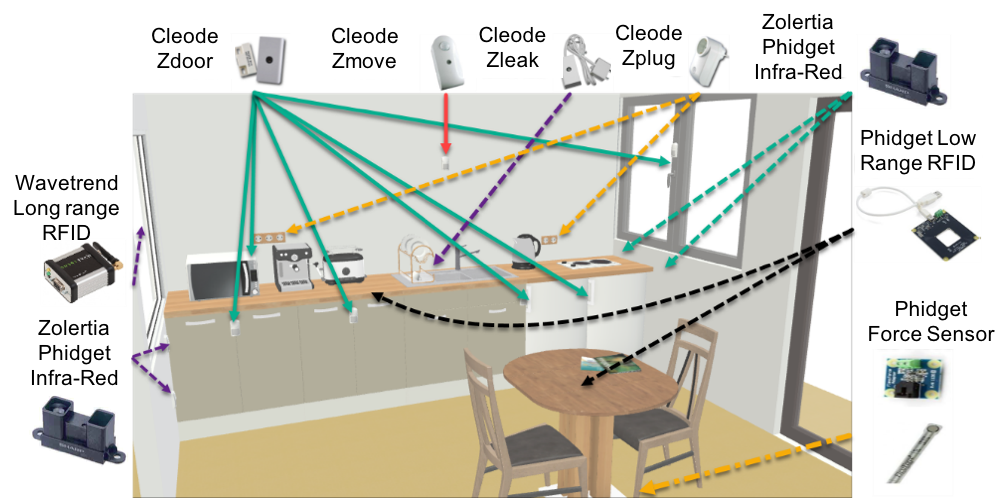

The dataset collection environment is set up on the premises of the LISSI laboratory. It is composed of four main spaces: a bedroom (Figure 2.A), a bathroom (Figure 2.B), a living room (Figure 2.C) and a kitchen (Figure 2.D). In these spaces, we deployed the wireless sensors and software systems of the ubistruct platform that are needed to capture the daily living activities (see http://www.plateforme-ubistruct). The deployed infrastructure runs on standard IoT protocols such as Bluetooth, Wi-Fi, 6LoWPAN and Zigbee. The sensors are synchronized over the internet and transmit the sensed data online using the XMPP and HTTP (REST) protocols.

The bedroom has a surface area of 12 m². It contains the basic furniture found in any bedroom, such as a bed, a night table, a night light, a closet, a shelf and a desk. The room also has a curtained window. In this dataset, the high-level activities are limited to sleeping, changing clothes, making the bed, drinking water, taking drugs, reading a book and making phone calls. The low-level activities are: opening/closing the curtains, window, door or cupboard, switching the spotlight on/off, lying, walking, getting up and sitting.

The bathroom is composed of two sub-spaces: toilet and cleaning. The inhabitant is expected to perform a variety of high-level activities in both sub-spaces freely and without any predefined scenario. The cleaning sub-space is mainly used for washing the face and hands, which can happen at any time of the day, but also for brushing hair, shaving and brushing teeth, which commonly happen before sleeping or after waking up. The low-level activities are opening/closing the door, flushing the toilet, opening/closing the tap, taking or putting down an object, etc.

The living room space is a rearrangement of the bedroom space, which has a surface area of 12 m². Two levels of activities are considered in this context. The high-level activities are (i) relaxing and leisure activities such as watching television, reading a book, etc., (ii) social activities such as speaking to friends by mobile phone or social apps, and (iii) receiving guests and also working from time to time. Eating in the living room is an exceptional activity; therefore, only taking a snack is considered in this experiment. The corresponding low-level activities are the following actions: walking, lying on the sofa, sitting on a chair, getting up, opening/closing the curtains, doors and windows, using the television remote control, etc.

The kitchen space contains more or less the same sensors, except for the force sensors, which are used to capture which chair the inhabitant is using. The high-level activities captured in this space are cooking, cleaning, and taking meals and medications. The cooking activity for each day includes several mid-level activities from the following list: preparing milk, preparing cereal, preparing a salad, preparing an accompaniment to the main course, preparing a fruit soft drink, preparing coffee, preparing tea, heating an already prepared meal, taking the drugs, etc.

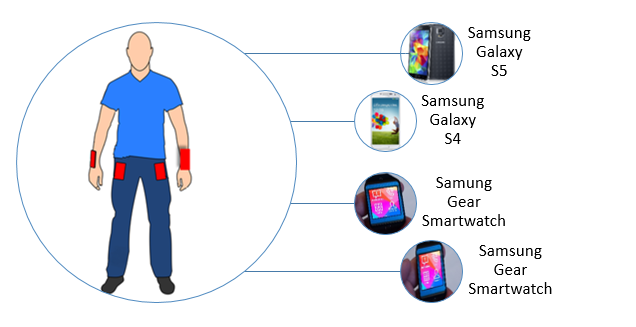

To capture human motions and physical actions, the inhabitant must wear two smartwatches and two smartphones during the experiments (see Figure 3). These wearable devices ensure the continuous acquisition of 3D acceleration data from their embedded sensors.
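A common first step with 3D acceleration data is to compute the magnitude of each sample and to summarize it over sliding windows. The sketch below is illustrative only: the data layout (a list of `(x, y, z)` tuples), the function names and the window parameters are assumptions, not the format or processing used for this dataset.

```python
import math


def magnitudes(samples):
    """Euclidean norm of each (x, y, z) acceleration sample."""
    return [math.sqrt(x * x + y * y + z * z) for x, y, z in samples]


def sliding_windows(values, size, step):
    """Yield consecutive windows of `size` values, advancing by `step`."""
    for start in range(0, len(values) - size + 1, step):
        yield values[start:start + size]


def window_means(samples, size, step):
    """Mean acceleration magnitude per window, a simple example feature."""
    return [sum(w) / len(w) for w in sliding_windows(magnitudes(samples), size, step)]


# Four identical samples, chosen so that the magnitude is exactly 5.0.
samples = [(3.0, 4.0, 0.0)] * 4
print(window_means(samples, size=2, step=1))  # [5.0, 5.0, 5.0]
```

The magnitude does not depend on how the device is oriented on the wrist or in the pocket, which is why it is often preferred over the raw axes for a first feature.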

To ensure the richness of the dataset, each subject must repeat a series of actions several times. These actions make up the different high-level activities. The considered actions are: opening/closing the doors of the rooms, the windows and the doors of the furniture, pouring a drink into a glass, stirring a liquid with a teaspoon, mixing ingredients in a dish, etc.