O-Smart NKRL-based Emotion Recognition

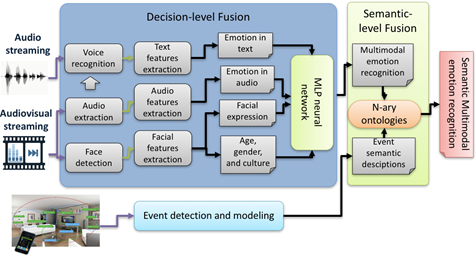

This demo presents a prototype of a smart system that can be deployed on ubiquitous robots to give them the capability to perform online multimodal emotion recognition while taking the context of the emotion into account. The context includes attributes such as the human’s eye gaze and the motions and positions of the brows, lips, and facial muscles, which serve as good features for recognising facial expressions. Likewise, the words used, their syntactic structure, their meaning, and the manner in which they are produced, as conveyed by the intensity and quality of the voice, form a good feature vector for recognising emotions in speech. Because emotion is defined as an immediate reaction following an event, it is also important to take into account what is happening around the human. In this work, the speech and audio-visual modalities are used because of the expressiveness of the information they contain. This contextual information is relevant to multimodal emotion recognition at the low level. Many other features influence emotional meaning. In this work, three of them (age, gender, and culture) are extracted from the audio-visual data and treated as a vector of "emotion analysis" features, which improve emotion recognition.

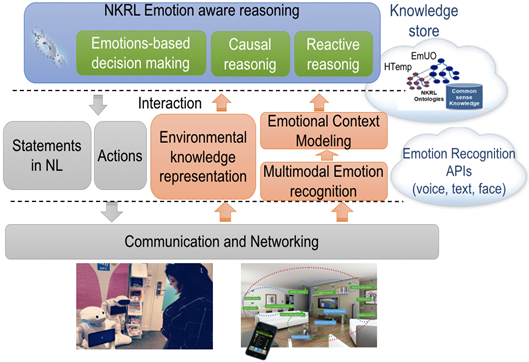

To endow ubiquitous robots with sufficient cognitive capabilities for accurate emotion recognition, this work proposes a hybrid reasoning model. The model is based on hybrid fusion techniques that combine information from multiple modalities at different levels. Here, hybrid fusion consists of combining, at the low level, feature-level and decision-level fusion techniques based on an MLP neural network. At the high level, the result of these techniques is exploited by the proposed semantic representation and reasoning models based on NKRL. The architecture of the proposed multimodal emotion recognition system is shown in the accompanying figure.
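As a rough illustration of the low-level stage, the sketch below combines feature-level fusion (concatenating per-modality feature vectors) with decision-level fusion (averaging the class probabilities of several classifiers). It is not the authors' implementation: the emotion labels, feature values, layer sizes, fusion weights, and the `TinyMLP` and `hybrid_fusion` names are all assumptions made for the example, and the networks use random, untrained weights.

```python
import math
import random

# Hypothetical label set; the source does not list the emotion classes.
EMOTIONS = ["anger", "happiness", "sadness", "neutral"]


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


class TinyMLP:
    """One-hidden-layer perceptron with random (untrained) weights."""

    def __init__(self, n_in, n_hidden, n_out, seed):
        rng = random.Random(seed)
        self.w1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hidden)]
        self.w2 = [[rng.uniform(-1, 1) for _ in range(n_hidden)] for _ in range(n_out)]

    def predict_proba(self, x):
        hidden = [math.tanh(sum(w * v for w, v in zip(row, x))) for row in self.w1]
        return softmax([sum(w * h for w, h in zip(row, hidden)) for row in self.w2])


def hybrid_fusion(face, speech, face_mlp, speech_mlp, joint_mlp,
                  weights=(1 / 3, 1 / 3, 1 / 3)):
    """Combine feature-level and decision-level fusion (assumed scheme)."""
    # Feature-level fusion: concatenate the two modality vectors.
    joint = face + speech
    # One decision (probability vector) per classifier.
    decisions = [
        face_mlp.predict_proba(face),
        speech_mlp.predict_proba(speech),
        joint_mlp.predict_proba(joint),
    ]
    # Decision-level fusion: weighted average of the probability vectors.
    return [sum(w * d[k] for w, d in zip(weights, decisions))
            for k in range(len(EMOTIONS))]


# Made-up feature vectors standing in for facial and speech descriptors.
face_feats = [0.2, -0.5, 0.9, 0.1]
speech_feats = [0.7, 0.3, -0.4]

fused = hybrid_fusion(
    face_feats,
    speech_feats,
    TinyMLP(4, 5, len(EMOTIONS), seed=0),
    TinyMLP(3, 5, len(EMOTIONS), seed=1),
    TinyMLP(7, 5, len(EMOTIONS), seed=2),
)
label = EMOTIONS[fused.index(max(fused))]
print(label, [round(p, 3) for p in fused])
```

In the system described above, the fused low-level result would then be passed to the NKRL-based semantic representation and reasoning layer, which this sketch does not attempt to model.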

References:

N. Ayari, H. Abdelkawy, A. Chibani, and Y. Amirat, "Towards Semantic Multimodal Emotion Recognition for Enhancing Assistive Services in Ubiquitous Robotics," in Proc. of the AAAI 2017 Fall Symposium Series, Arlington, United States, Nov. 2017, pp. 2-9.