Deep Learning and Ontology-based Probabilistic Reasoning for Recognising Affordances and Intention

This is a prototype of a smart system that can be deployed in ubiquitous robots to give them the capability to perform online recognition of object affordances and to infer the user's intention. Visual intelligence is one of the most important aspects of human cognition, and its paramount goal is contextual visual reasoning. Take a cup as an example: from a single image, humans can infer its name, texture, and color, as well as the actions it affords. The smart system uses a semantic knowledge representation model in which a contextual object affordance is modeled as the relationship between an object and the set of actions that object allows in a given situation. In other words, objects may afford different actions at different places, times, or situations. The smart system uses contextual object affordances to filter the possible actions that a companion robot can monitor or perform in an ambient assisted living environment. Contextual affordances can also be used as part of a larger process to extract an agent's (human or robot) intentions, by restricting the possible intentions to those consistent with the actions afforded by the environment at a given time.
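The idea of a contextual affordance as a relation between an object, a situation, and a set of actions can be illustrated with a minimal Python sketch. Everything in it is hypothetical and chosen for illustration only: the `AFFORDANCES` and `INTENTIONS` tables, the object, place, and action names, and the two helper functions are assumptions, not part of the prototype, which relies on an ontology rather than plain dictionaries.

```python
from typing import Dict, FrozenSet, Iterable, Set, Tuple

# Hypothetical knowledge base: (object, place) -> actions afforded in that context.
AFFORDANCES: Dict[Tuple[str, str], FrozenSet[str]] = {
    ("cup", "kitchen"): frozenset({"drink", "wash", "fill"}),
    ("cup", "living_room"): frozenset({"drink"}),
    ("knife", "kitchen"): frozenset({"cut", "wash"}),
}

# Hypothetical intentions, each described by the actions it requires.
INTENTIONS: Dict[str, FrozenSet[str]] = {
    "have_a_drink": frozenset({"drink"}),
    "do_the_dishes": frozenset({"wash"}),
    "prepare_meal": frozenset({"cut"}),
}


def afforded_actions(objects: Iterable[str], place: str) -> Set[str]:
    """Union of the actions afforded by the observed objects in the given place."""
    actions: Set[str] = set()
    for obj in objects:
        # Unknown (object, place) pairs afford nothing in this sketch.
        actions |= AFFORDANCES.get((obj, place), frozenset())
    return actions


def feasible_intentions(objects: Iterable[str], place: str) -> Set[str]:
    """Keep only the intentions whose required actions are all afforded."""
    available = afforded_actions(objects, place)
    return {name for name, required in INTENTIONS.items() if required <= available}
```

Under these assumptions, a cup seen in the living room affords only drinking, so `feasible_intentions(["cup"], "living_room")` keeps `have_a_drink` and discards the intentions that need washing or cutting.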

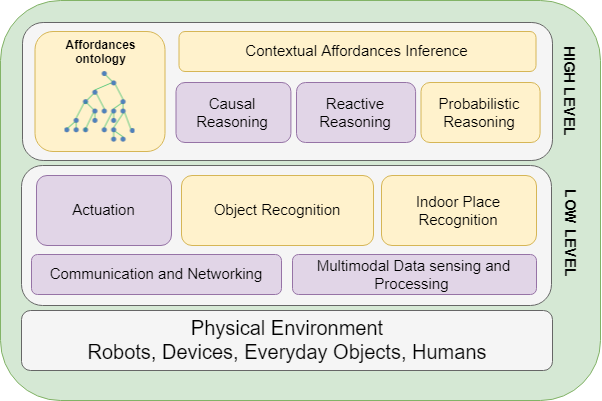

The architecture of the smart system is composed of modules that predict contextual object affordances from place information and perform classification and semantic reasoning to infer the user's intention. It combines deep Convolutional Neural Networks (CNNs) with probabilistic Description Logics (DL). The role of the probabilistic DL reasoning is to derive contextual affordances from low-level object and place information.
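As a rough illustration of how low-level object and place predictions could feed a probabilistic layer, the sketch below combines two classifier score distributions with a table of conditional probabilities. It is a simplified stand-in, not the probabilistic DL reasoner used in the prototype: the `RULES` table, its values, the function name, and the assumption that object and place predictions are independent are all hypothetical.

```python
from typing import Dict, Tuple

# Hypothetical rule table: P(action | object, place). Values are illustrative only.
RULES: Dict[Tuple[str, str], Dict[str, float]] = {
    ("cup", "kitchen"): {"drink": 0.9, "wash": 0.6},
    ("cup", "office"): {"drink": 0.8},
    ("bottle", "kitchen"): {"drink": 0.7, "pour": 0.8},
}


def contextual_affordances(
    p_object: Dict[str, float], p_place: Dict[str, float]
) -> Dict[str, float]:
    """Marginalize action probabilities over object and place predictions.

    Computes P(action) = sum over (object, place) of
    P(object) * P(place) * P(action | object, place),
    assuming the two classifier outputs are independent.
    """
    scores: Dict[str, float] = {}
    for (obj, place), actions in RULES.items():
        # Weight of this context given the (assumed) classifier outputs.
        weight = p_object.get(obj, 0.0) * p_place.get(place, 0.0)
        for action, p_action in actions.items():
            scores[action] = scores.get(action, 0.0) + weight * p_action
    return scores


# Example inputs standing in for the softmax outputs of object and place CNNs.
example = contextual_affordances(
    {"cup": 0.8, "bottle": 0.2},
    {"kitchen": 0.5, "office": 0.5},
)
```

With these example inputs, `drink` receives the highest score because it is supported in every context the classifiers consider plausible, whereas `pour` depends on the less likely `bottle` prediction.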

This prototype, developed in the Emospaces European project, is still under test; a video demo will be made available soon.

References:

H. Abdelkawy, S. Fiorini, A. Chibani, N. Ayari, and Y. Amirat, "Deep CNN and Probabilistic DL Reasoning for Contextual Affordances," in Proc. of the AAAI 2018 Fall Symposium Series, Arlington, United States, Oct. 2018.