O-Smart NKRL

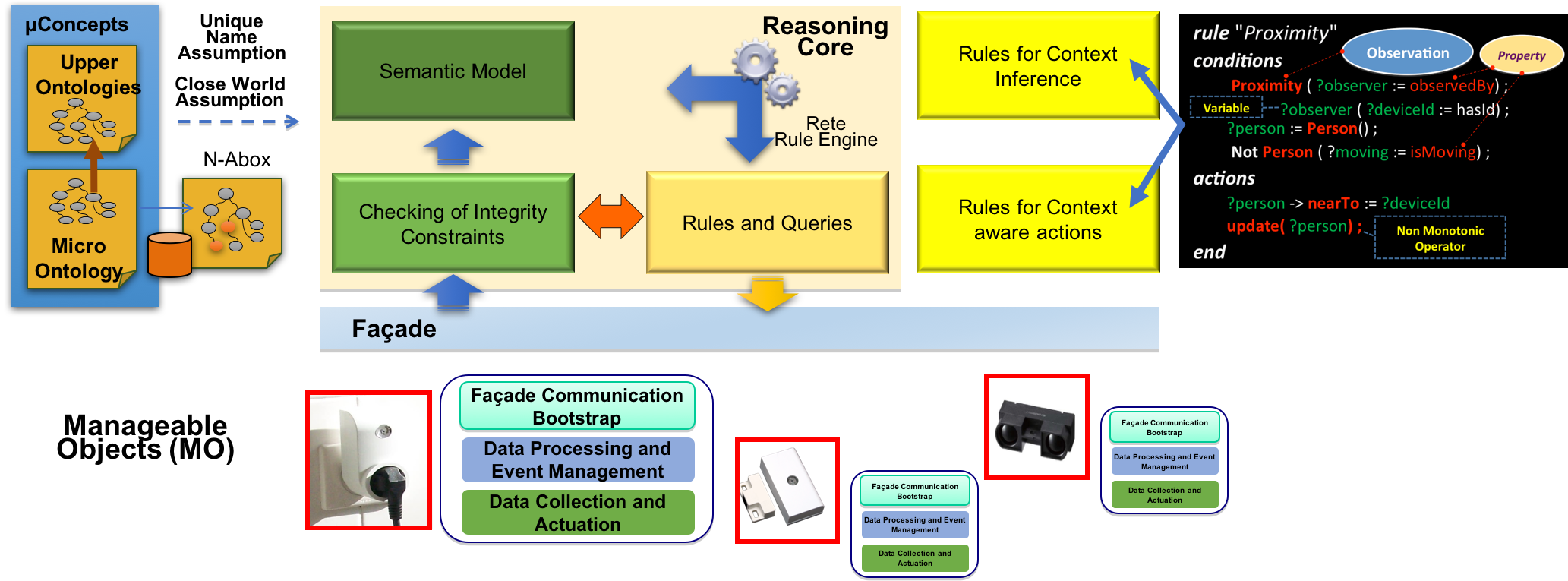

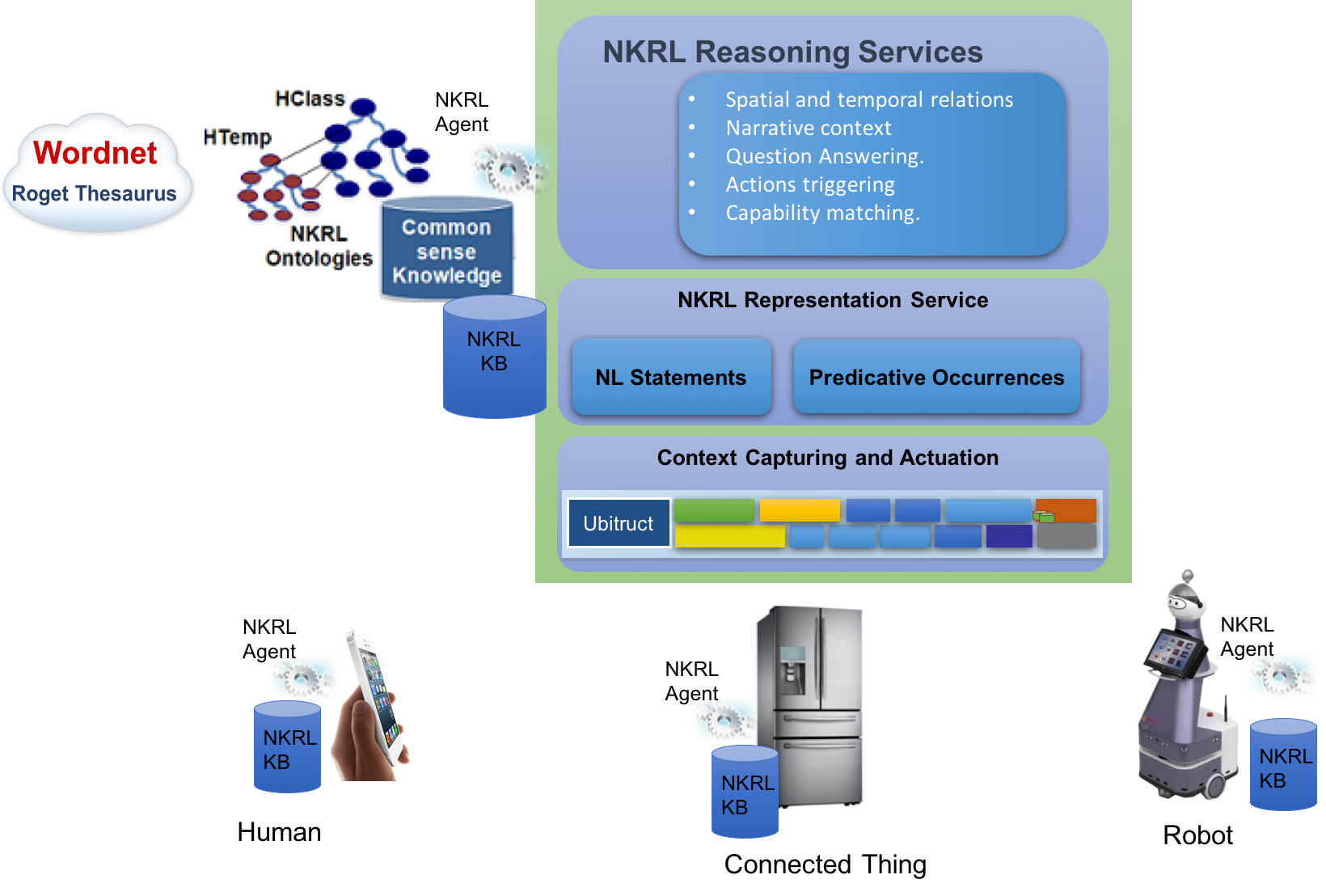

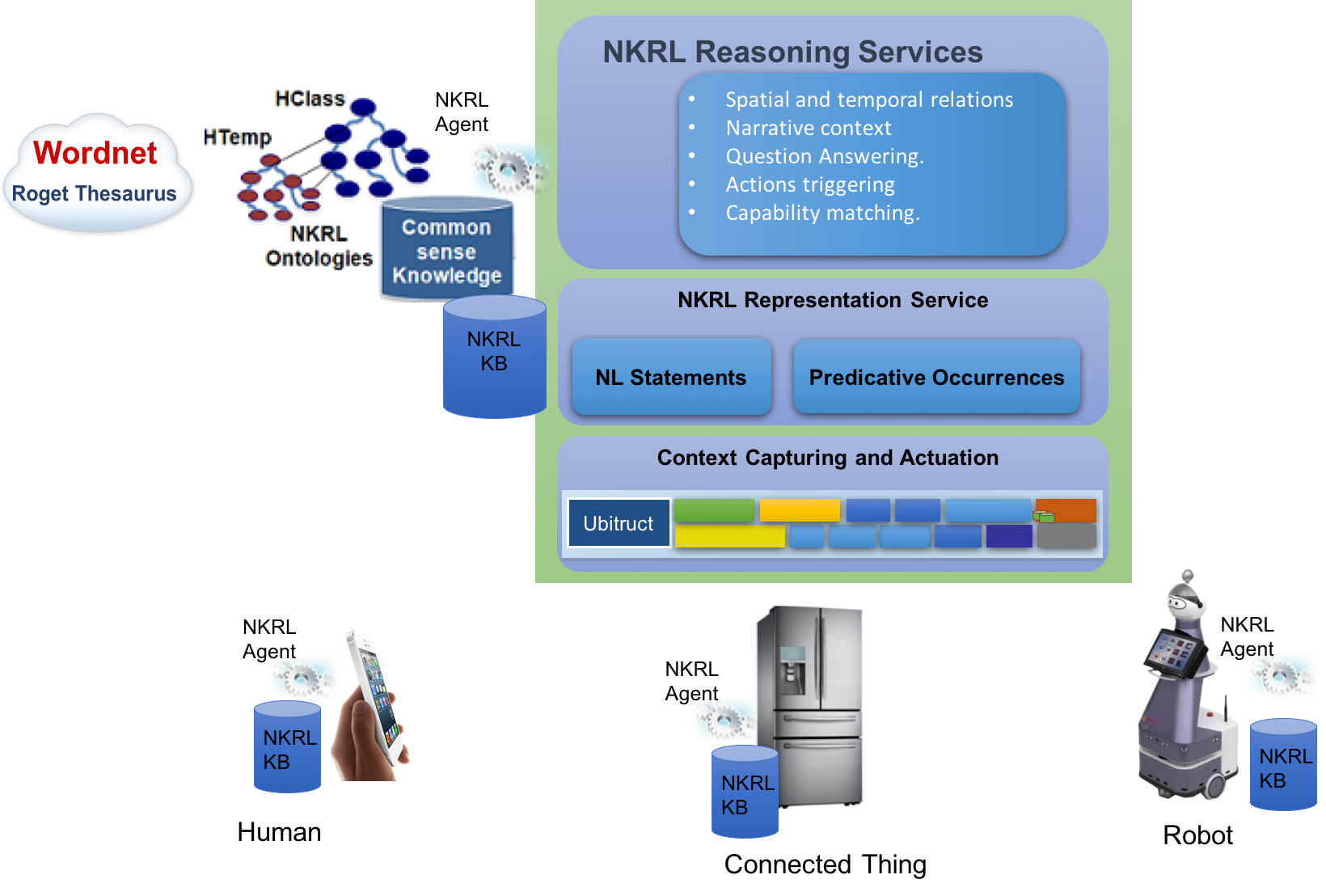

One of the most important issues in ambient intelligence is creating a unified architecture that integrates and manages context information from heterogeneous sources such as environmental web services, sensors, robots and humans, and in particular integrates this information at the representation level. O-Smart NKRL is a cognitive architecture proposed as a framework for designing and operating assistive agents. The architecture is composed of three main layers.

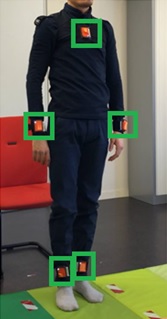

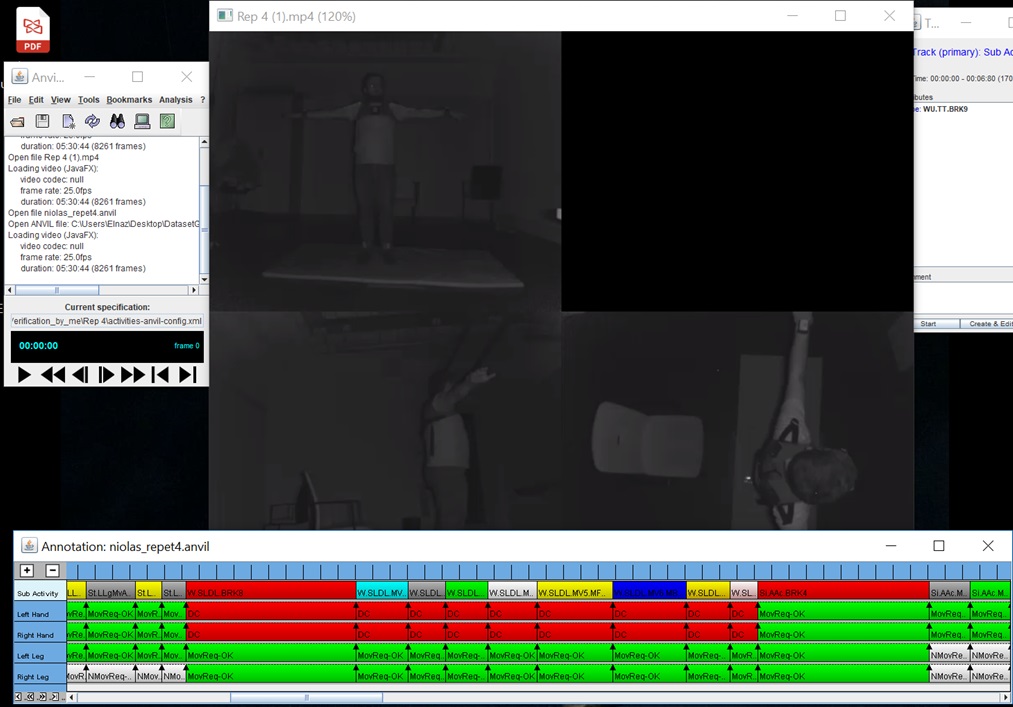

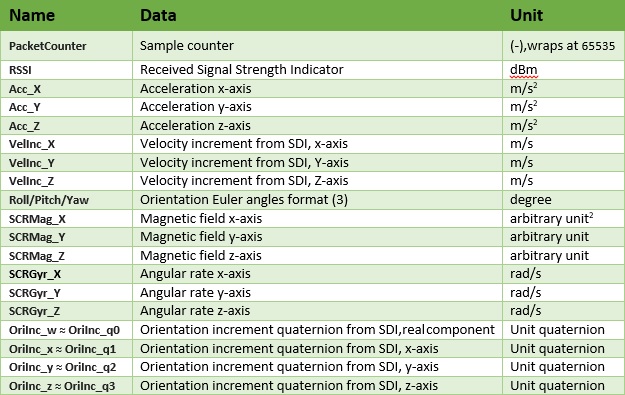

At the low level, the communication and verbalization layer is composed of modules for interfacing with smart perception systems that capture relevant context data such as objects in the scene, RFID tags, beacons, and human speech.

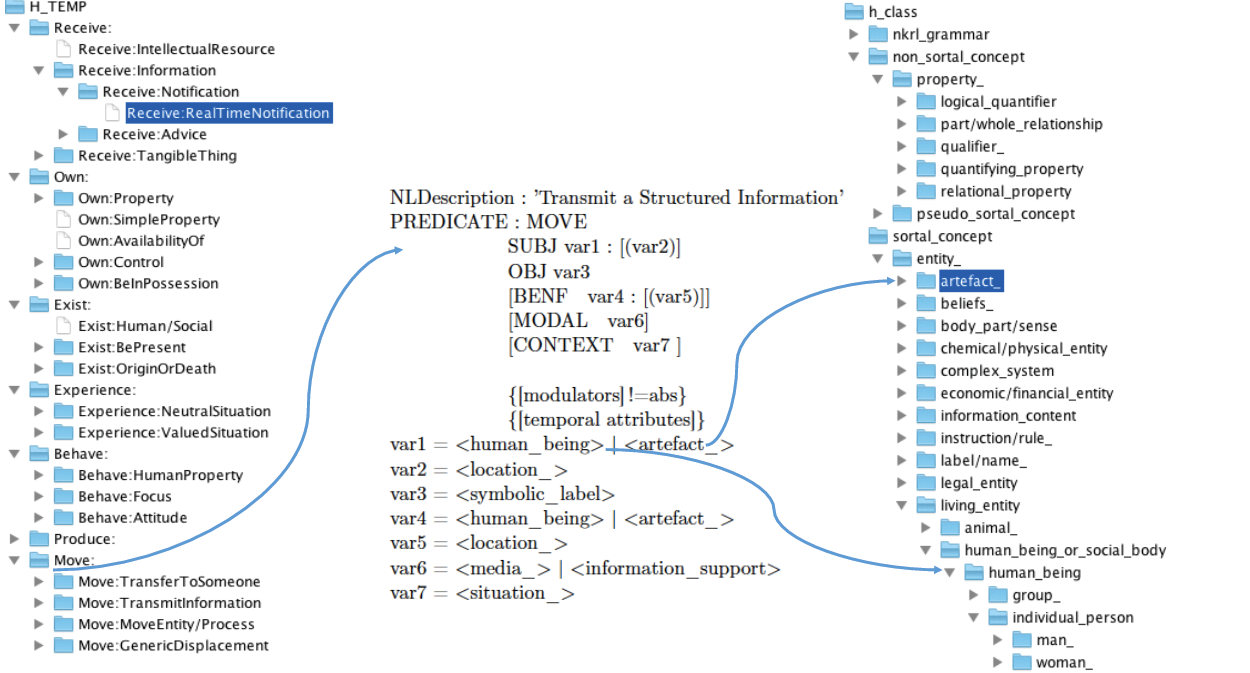

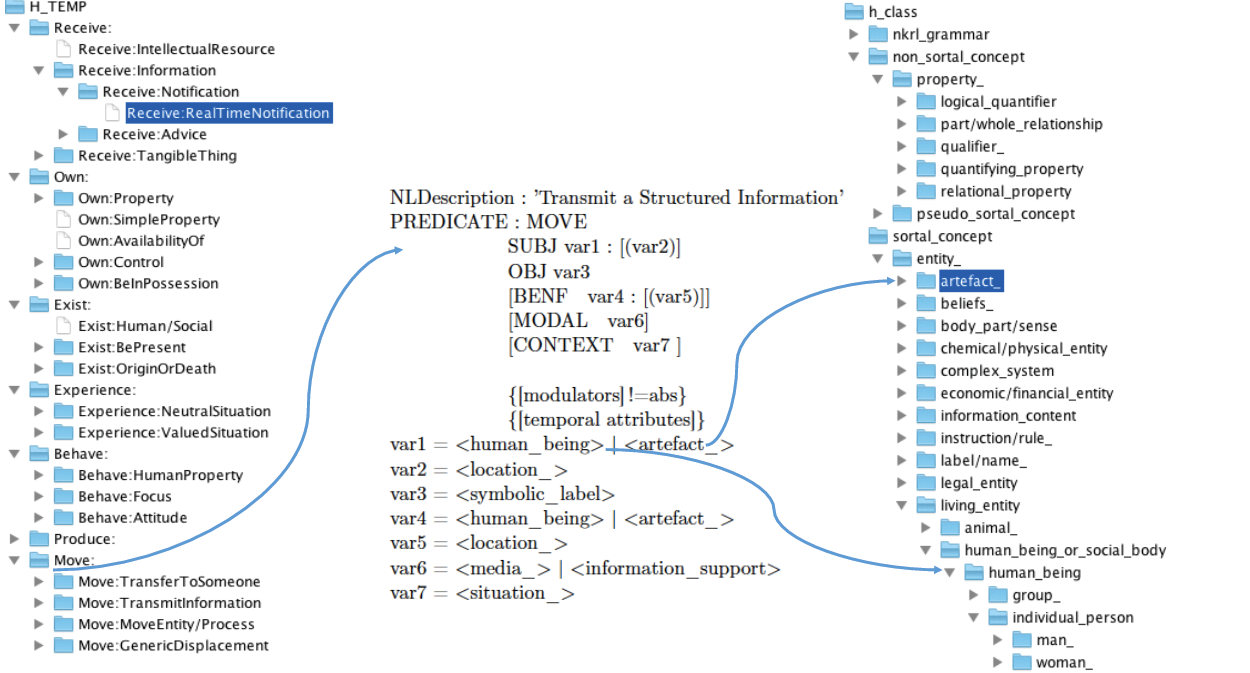

At the middle level, the knowledge representation layer offers a service that converts context data into narrative semantic knowledge and, conversely, converts semantic knowledge into a natural-language (NL) verbalisation or an action that an actuator or a robot can execute in the real world. The semantic knowledge is represented in the Narrative Knowledge Representation Language (NKRL), a uniform representation tool based on two main ontologies that semantically describe "static" and "dynamic" entities, respectively.

• HClass ontology: describes static entities. It is a taxonomy of entities (concepts and individuals) linked by the is-subclass-of or is-instance-of relation, as in any OWL ontology.

• HTemp ontology: describes dynamic entities, that is, any state, situation, event, interaction or change. These entities are narrative information describing what is happening in the environment. The HTemp ontology provides a set of generic templates for representing them. The templates are defined using the notions of "conceptual predicate" and "functional roles"; see the references below for more details.
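The two ontologies can be illustrated with a minimal Python sketch. It is not the O-Smart NKRL implementation: the class names `HClass` and `Template`, the concept names (`agent_`, `robot_`, `location_`), the template name, and the role labels `SUBJ` and `DEST` are all assumptions chosen for illustration. The sketch shows a taxonomy with is-subclass-of and is-instance-of links, and a template that pairs one conceptual predicate with roles whose fillers are checked against the taxonomy.

```python
from dataclasses import dataclass, field


@dataclass
class HClass:
    """Toy taxonomy of concepts (is-subclass-of) and individuals (is-instance-of)."""
    parents: dict = field(default_factory=dict)    # concept -> parent concept
    instances: dict = field(default_factory=dict)  # individual -> concept

    def subsumes(self, general: str, term: str) -> bool:
        """Return True if `term` is `general` or lies below it in the taxonomy."""
        if term == general:
            return True
        current = self.instances.get(term, term)
        while current is not None:
            if current == general:
                return True
            current = self.parents.get(current)
        return False


@dataclass(frozen=True)
class Template:
    """Toy HTemp-style template: one conceptual predicate plus constrained roles."""
    name: str
    predicate: str
    constraints: tuple  # (role, required concept) pairs

    def instantiate(self, hclass: HClass, fillers: dict) -> dict:
        """Build an occurrence if every role filler satisfies its constraint."""
        for role, concept in self.constraints:
            if role not in fillers:
                raise ValueError(f"missing role {role}")
            if not hclass.subsumes(concept, fillers[role]):
                raise ValueError(f"{fillers[role]} is not a {concept}")
        return {"template": self.name, "predicate": self.predicate, **fillers}


# Hypothetical concepts and individuals, for illustration only.
hclass = HClass(
    parents={"robot_": "agent_", "human_being": "agent_", "kitchen_": "location_"},
    instances={"ROBOT_1": "robot_", "KITCHEN_1": "kitchen_"},
)
move = Template("Move:AgentToLocation", "MOVE",
                (("SUBJ", "agent_"), ("DEST", "location_")))
occurrence = move.instantiate(hclass, {"SUBJ": "ROBOT_1", "DEST": "KITCHEN_1"})
print(occurrence)
```

Keeping the role constraints in the template rather than in each occurrence means that a filler of the wrong type (for example a location in the `SUBJ` role) is rejected when the occurrence is created.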

The representation service creates NKRL predicative occurrences and saves them in a shared knowledge base, which agents can query using NKRL queries. We distinguish four kinds of predicative occurrences.

• Spatio-temporal descriptions of the entities populating the ambient environment (humans, robots, devices, etc.).

• Events and fluents that describe what is going on in the environment, such as state changes, actions, activities, emotions, etc.

• Dialogues between human and robotic agents.

• Actions
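As a rough illustration of a shared knowledge base holding these four kinds of occurrences, the following Python sketch stores occurrences and answers simple queries. It does not use real NKRL query syntax: `Kind`, `Occurrence`, `KnowledgeBase`, the role labels, the example individuals, and the use of numeric timestamps are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    SPATIO_TEMPORAL = "spatio-temporal description"
    EVENT_OR_FLUENT = "event or fluent"
    DIALOGUE = "dialogue"
    ACTION = "action"


@dataclass(frozen=True)
class Occurrence:
    kind: Kind
    predicate: str
    roles: tuple   # (role, filler) pairs
    start: float   # validity interval in seconds (hypothetical convention)
    end: float


class KnowledgeBase:
    """Toy shared store that agents can add to and query."""

    def __init__(self):
        self._occurrences = []

    def add(self, occ: Occurrence) -> None:
        self._occurrences.append(occ)

    def query(self, kind=None, predicate=None, at=None, **roles):
        """Return occurrences matching every criterion that was given."""
        results = []
        for occ in self._occurrences:
            if kind is not None and occ.kind is not kind:
                continue
            if predicate is not None and occ.predicate != predicate:
                continue
            if at is not None and not (occ.start <= at <= occ.end):
                continue
            fillers = dict(occ.roles)
            if all(fillers.get(r) == v for r, v in roles.items()):
                results.append(occ)
        return results


kb = KnowledgeBase()
kb.add(Occurrence(Kind.SPATIO_TEMPORAL, "EXIST",
                  (("SUBJ", "JOHN"), ("LOCATION", "KITCHEN_1")), 0.0, 60.0))
kb.add(Occurrence(Kind.EVENT_OR_FLUENT, "EXPERIENCE",
                  (("SUBJ", "JOHN"), ("OBJ", "fatigue_")), 30.0, 90.0))
kb.add(Occurrence(Kind.ACTION, "MOVE",
                  (("SUBJ", "ROBOT_1"), ("DEST", "KITCHEN_1")), 45.0, 50.0))

# Everything known about JOHN at t = 40 s.
about_john = kb.query(at=40.0, SUBJ="JOHN")
print([occ.predicate for occ in about_john])
```

Tagging each occurrence with its kind and a time interval lets a query combine "what", "who" and "when" criteria in a single call.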

At the high-level, the reasoning layer offers the following reasoning services:

• Inference of spatial and temporal relations between entities.

• Inference of the narrative context by analysing and linking relevant implicit relations between predicative occurrences.

• Question Answering.

• Action triggering based on preferences.

• Capability matching.

These services are implemented using two kinds of NKRL inference rules: transformation rules and hypothesis rules. These rules are processed by the NKRL Filtering Unification Module (FUM) and the production inference engine. The FUM operates on the predicative occurrences stored in the knowledge base by means of search patterns.
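The idea of filtering occurrences with a search pattern can be sketched in Python as follows. This is a simplified illustration under stated assumptions, not the actual FUM: the taxonomy, the dictionary layout of patterns and occurrences, and the functions `is_a`, `filter_unify` and `answer` are hypothetical. The sketch also assumes one possible reading of a transformation rule, namely that it rewrites a search pattern that found nothing into a related pattern that may succeed.

```python
# Toy taxonomy mapping each term to its parent (concepts and individuals alike).
PARENT = {
    "ROBOT_1": "robot_", "robot_": "agent_",
    "JOHN": "human_being", "human_being": "agent_",
    "KITCHEN_1": "room_", "room_": "location_",
}


def is_a(term, concept):
    """Walk up the taxonomy from `term` until `concept` is found or the root is passed."""
    while term is not None:
        if term == concept:
            return True
        term = PARENT.get(term)
    return False


def filter_unify(pattern, occurrences):
    """Keep occurrences with the pattern's predicate whose fillers satisfy its role constraints."""
    matches = []
    for occ in occurrences:
        if occ["predicate"] != pattern["predicate"]:
            continue
        if all(role in occ["roles"] and is_a(occ["roles"][role], concept)
               for role, concept in pattern["roles"].items()):
            matches.append(occ)
    return matches


def answer(pattern, occurrences, transformations):
    """Try the pattern directly; if nothing matches, try the rewritten patterns in order."""
    direct = filter_unify(pattern, occurrences)
    if direct:
        return direct
    for rewrite in transformations:
        alternative = rewrite(pattern)
        if alternative is not None:
            found = filter_unify(alternative, occurrences)
            if found:
                return found
    return []


def exist_to_move(pattern):
    """Hypothetical rule: someone who moved to a place can be assumed to be there."""
    if pattern["predicate"] != "EXIST" or "LOCATION" not in pattern["roles"]:
        return None
    roles = {"DEST": pattern["roles"]["LOCATION"]}
    if "SUBJ" in pattern["roles"]:
        roles["SUBJ"] = pattern["roles"]["SUBJ"]
    return {"predicate": "MOVE", "roles": roles}


knowledge_base = [
    {"predicate": "MOVE", "roles": {"SUBJ": "JOHN", "DEST": "KITCHEN_1"}},
]
# "Which human is in a room?" has no direct EXIST occurrence to match.
question = {"predicate": "EXIST",
            "roles": {"SUBJ": "human_being", "LOCATION": "room_"}}
result = answer(question, knowledge_base, [exist_to_move])
print(result)
```

Because role constraints are checked through the taxonomy, a pattern that asks for a `human_being` in a `room_` matches an occurrence about `JOHN` and `KITCHEN_1` without naming either individual.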

References:

H. Abdelkawy, N. Ayari, A. Chibani, Y. Amirat, and F. Attal, "Deep HMResNet Model for Human Activity-Aware Robotic Systems," in Proc. of the AAAI 2018 Fall Symposium Series, Arlington, United States, Oct. 2018.

H. Abdelkawy, S. Fiorini, A. Chibani, N. Ayari, and Y. Amirat, "Deep CNN and Probabilistic DL Reasoning for Contextual Affordances," in Proc. of the AAAI 2018 Fall Symposium Series, Arlington, United States, Oct. 2018.

N. Ayari, A. Chibani, Y. Amirat, and G. Fried, "Contextual Knowledge Representation and Reasoning Models for Autonomous Robots," in Proc. of the AAAI 2017 Fall Symposium Series, Arlington, United States, Nov. 2017, pp. 246-253.

N. Ayari, H. Abdelkawy, A. Chibani, and Y. Amirat, "Towards Semantic Multimodal Emotion Recognition for Enhancing Assistive Services in Ubiquitous Robotics," in Proc. of the AAAI 2017 Fall Symposium Series, Arlington, United States, Nov. 2017, pp. 2-9.

N. Ayari, A. Chibani, Y. Amirat, and E. Matson, "A Semantic Approach for Enhancing Assistive Services in Ubiquitous Robotics," Robotics and Autonomous Systems, Elsevier, vol. 75, pp. 17-27, 2016.

A. Chibani, A. Bikakis, T. Patkos, Y. Amirat, S. Bouznad, N. Ayari, and L. Sabri, "Using Cognitive Ubiquitous Robots for Assisting Dependent People in Smart Spaces," in Intelligent Assistive Robots: Recent advances in assistive robotics for everyday activities, S. Mohammed, J. C. Moreno, K. Kong, and Y. Amirat, Eds., Springer Tracts on Advanced Robotics (STAR) series, 2015, pp. 297-316.

N. Ayari, A. Chibani, and Y. Amirat, "Semantic Management of Human-Robot Interaction in Ambient Intelligence using N-ary Ontologies," in ICRA 2013, Karlsruhe, Germany, May 2013, pp. 1164-1171.

N. Ayari, A. Chibani, and Y. Amirat, "A Semantic Approach to Enhance Human-Robot Interaction in AmI Environments," in Human-Agent Interaction, Workshop at IEEE/RSJ International Conference on Intelligent Robots and Systems, IROS 2012, Vilamoura, Portugal, 2012.

L. Sabri, A. Chibani, Y. Amirat, and G. P. Zarri, "Narrative Reasoning for Cognitive Ubiquitous Robots," in Knowledge Representation for Autonomous Robots, Workshop at IEEE/RSJ International Conference on Intelligent Robots and Systems, IROS 2011, San Francisco, United States, 2011.

L. Sabri, A. Chibani, Y. Amirat, and G. P. Zarri, "Semantic and Architectural Approach for Spatio-Temporal Reasoning in Ambient Assisted Living," in 22nd International Joint Conference on Artificial Intelligence, IJCAI 2011, Barcelona, Spain, 2011, pp. 77-84.