Daily Physical Activities Dataset

Dataset Information:

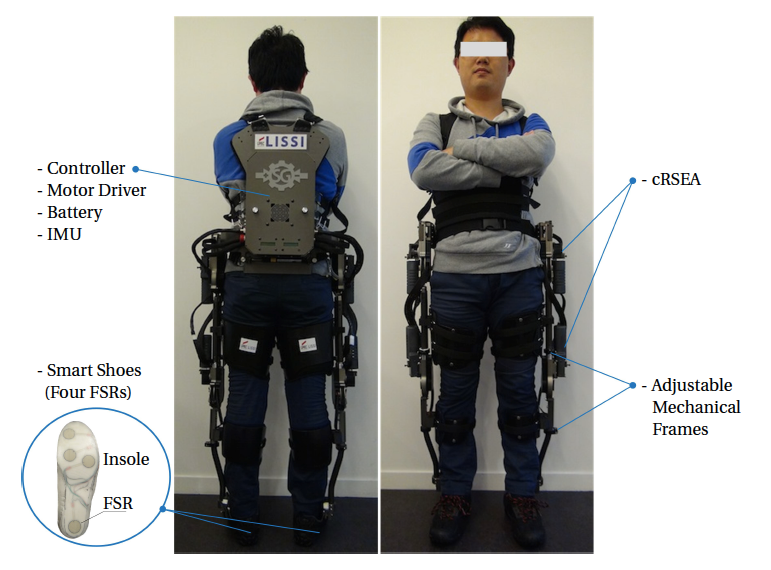

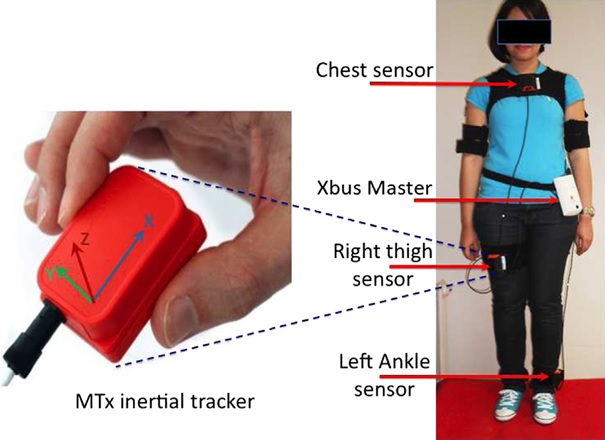

The sensors used for data acquisition consisted of three MTx 3-DOF inertial trackers developed by Xsens Technologies. Each MTx unit includes a tri-axial accelerometer measuring acceleration in 3-D space (with a dynamic range of ±5g, where g represents the gravitational constant). The sensor placement was chosen to represent human body motion while imposing few constraints on the wearer and ensuring the wearer's comfort and safety. The sensors were placed at the chest, the right thigh and the left ankle, respectively, as shown in Fig. 1. The points near the hip and torso exhibit a 6g range in acceleration.

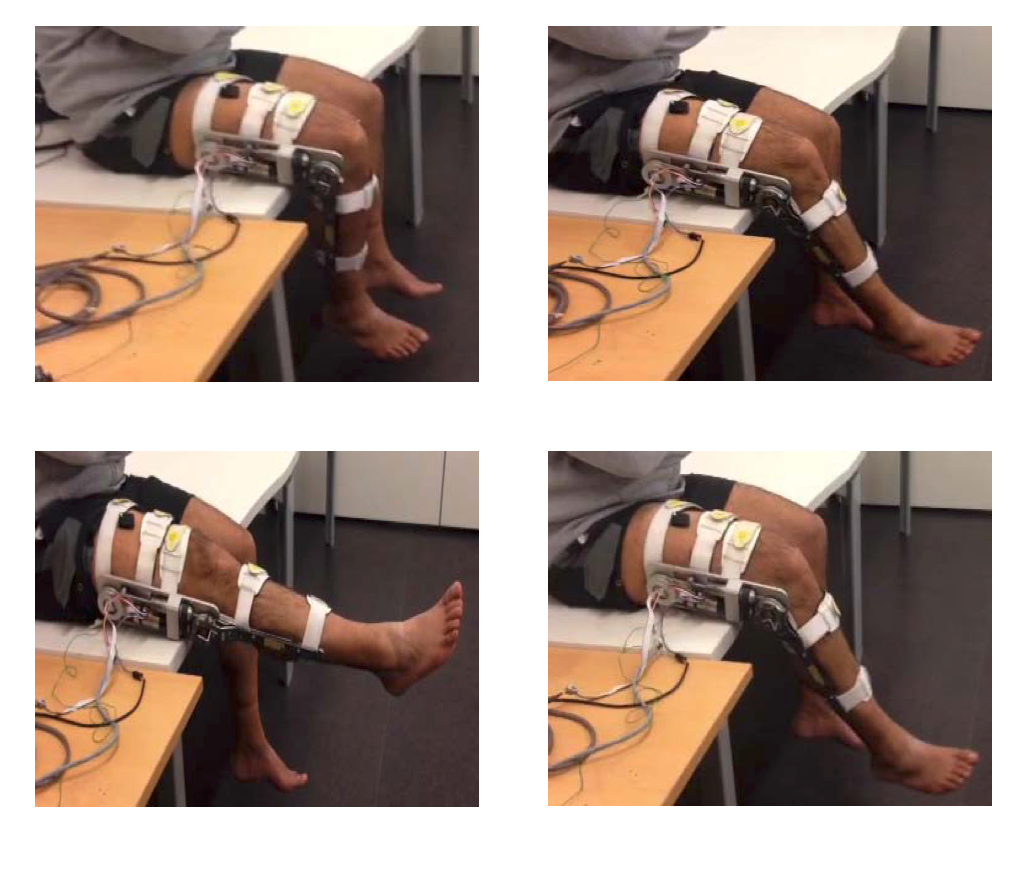

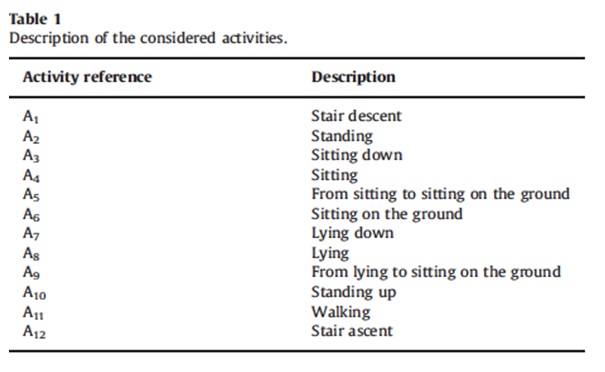

Our experiments also show that the ankle-sensor accelerations measured during the different activities do not exceed the limit of ±5g. The sampling frequency is set to 25 Hz, which is sufficient since it exceeds the 20 Hz required to assess daily physical activity. The sensors were fixed on the subjects with the help of an assistant before the measurements began. The sensor placement was chosen to represent predominantly upper-body activities such as standing up, sitting down, etc. and predominantly lower-body activities such as walking, stair ascent, stair descent, etc. Specific straps are used to secure each MTx unit in place. This combination allows for efficient inter-subject transfer. The MTx units are connected to a central unit called the Xbus Master, which is attached to the subject's belt. Raw acceleration data are collected over time while the activities are performed, and data transmission between the units and the receiver is carried out through a Bluetooth wireless link. The experiments were conducted at the LISSI Lab, University of Paris-Est Créteil (UPEC), with six healthy subjects of different ages (none of them the researchers) in an office environment. In order to gather a representative dataset, the volunteers were recruited within a given range of age (25–30 years) and weight (55–70 kg). Activity labels were estimated by an independent operator. Data are stored in a file and the acceleration signals are analyzed using MATLAB software. Twelve activities and transitions were studied; they are shown in Table 1, and some of them are illustrated in Fig. 2.
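As a rough illustration of the acquisition parameters above, the following Python sketch converts sample indices to timestamps at the stated 25 Hz sampling frequency and flags readings outside the stated ±5g limit. The function names and the example readings are hypothetical and are not part of the dataset or of the authors' MATLAB analysis.

```python
SAMPLING_HZ = 25.0  # sampling frequency stated in the description
RANGE_G = 5.0       # +-5g limit stated in the description


def sample_times(n_samples, fs=SAMPLING_HZ):
    """Return the time in seconds of each sample, starting at 0."""
    return [i / fs for i in range(n_samples)]


def out_of_range(samples_g, limit=RANGE_G):
    """Return the indices of samples whose magnitude exceeds the limit (in g)."""
    return [i for i, a in enumerate(samples_g) if abs(a) > limit]


# Made-up readings in units of g, for illustration only.
readings = [0.98, -1.2, 5.3, 0.4]
print(sample_times(len(readings)))
print(out_of_range(readings))
```

At 25 Hz, consecutive samples are 0.04 s apart, so one minute of recording from a single axis corresponds to 1500 samples.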

The activities were chosen to give an appropriate representation of everyday activities involving different parts of the body. The recognized activities and transitions differ in duration and intensity level. The subjects were asked to perform the activities in their own style; the only constraint was the sequential order of the activities. Note that the activities A3, A5, A7, A9 and A11 represent dynamic transitions between static activities. Since each of the three sensor units is a tri-axial accelerometer, a 9-dimensional acceleration time series is recorded over time for each activity. The time series present regime changes over time, in which each regime is associated with an activity.
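To make the structure of the recordings concrete, here is a minimal Python sketch of the 9-channel layout and of how a per-sample label sequence splits into regimes. The channel order, the channel names and the example label sequence are assumptions made for illustration; the actual file layout is not specified here.

```python
from itertools import groupby

# Hypothetical channel order: three sensors times three axes.
SENSORS = ("chest", "right_thigh", "left_ankle")
AXES = ("x", "y", "z")
CHANNELS = [f"{s}_{a}" for s in SENSORS for a in AXES]


def regimes(labels):
    """Split per-sample labels into (label, start, end) runs, end exclusive."""
    runs = []
    start = 0
    for label, group in groupby(labels):
        length = sum(1 for _ in group)
        runs.append((label, start, start + length))
        start += length
    return runs


# Made-up label sequence: a static activity, a transition, a static activity.
labels = ["A2", "A2", "A3", "A4", "A4", "A4"]
print(len(CHANNELS))
print(regimes(labels))
```

Each returned tuple marks one regime, so the boundaries between tuples are the regime changes described above.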
